By David Wong

Founder of Claruspon

Preface

The continued expansion of the Cloud and of AI computing is driving change in datacenter networks, because these workloads require features beyond what the Clos topology alone can provide, notably low congestion, high throughput, and good scalability.

In this series of articles, I will show you the fundamentals of a leading-edge technology whose networking characteristics are more desirable than those of a Clos network alone, resulting in a more effective network that supports a range of business goals.

State of the Art and Issues

The 2023 OCP/FTS in San Jose was a great success. I met many great people (we are now connected on LinkedIn) and had the chance to show them BGP-based non-minimal routing for cloud and AI computing. I also attended many presentations from the SONiC Workshop, the Expo Hall Stage Presentations, and the AI and Networking tracks.

Here I want to highlight some thoughts that were not covered in those presentations, so that in the next post I can jump right into the topic that I think will help the industry. A good reference is the paper from MIT and Meta [2], which I will refer to throughout.

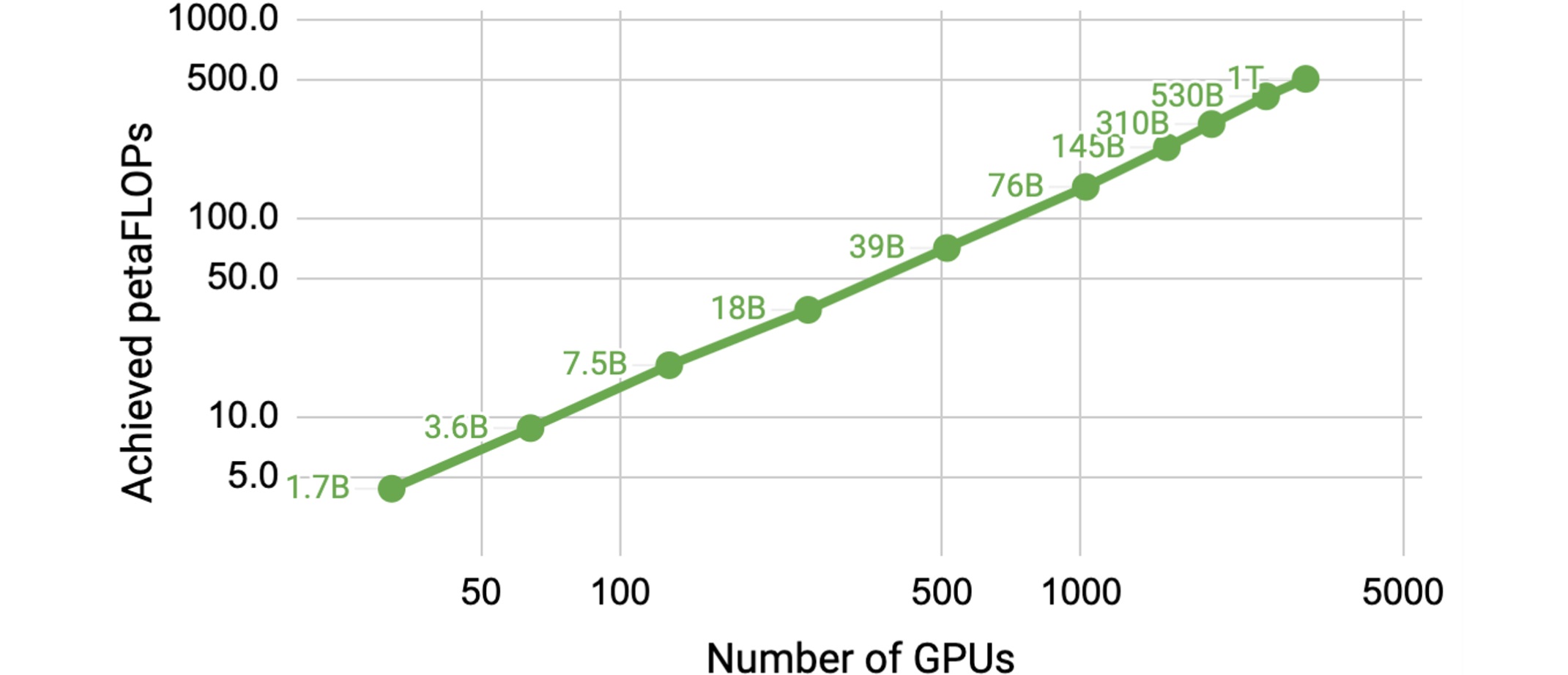

Figure 1 – Achievable petaFLOPs versus Size of GPU Cluster

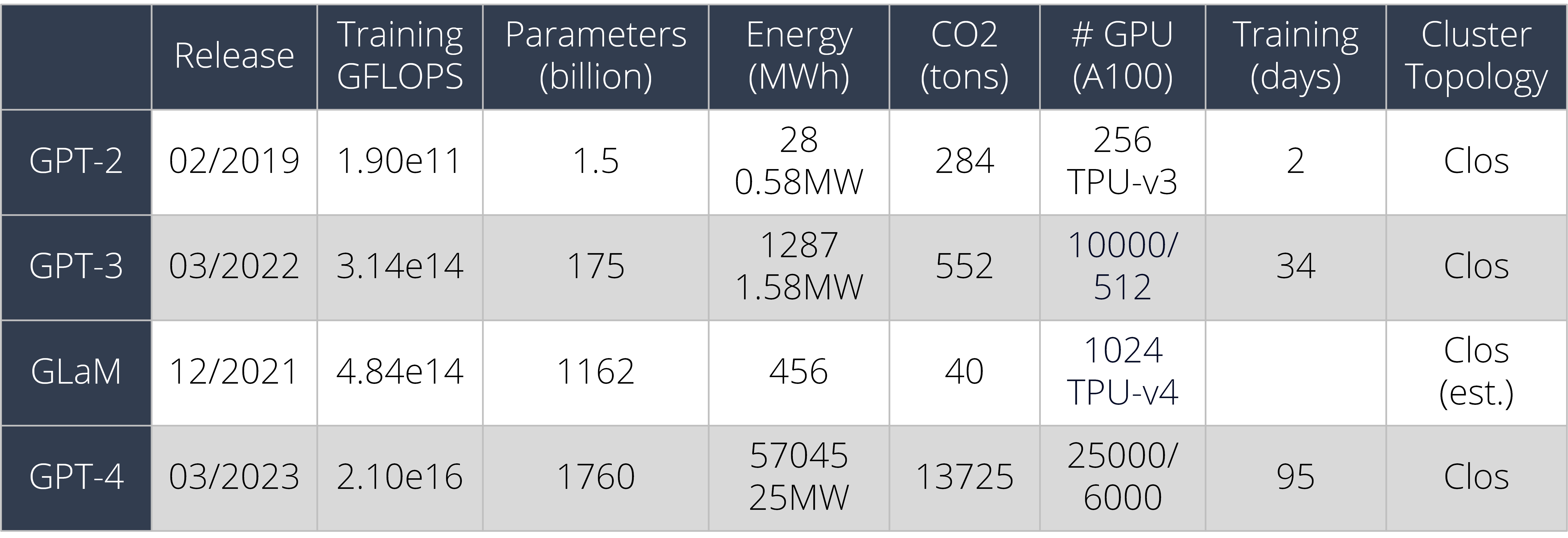

Table 1 – LLM (Large Language Model) Information

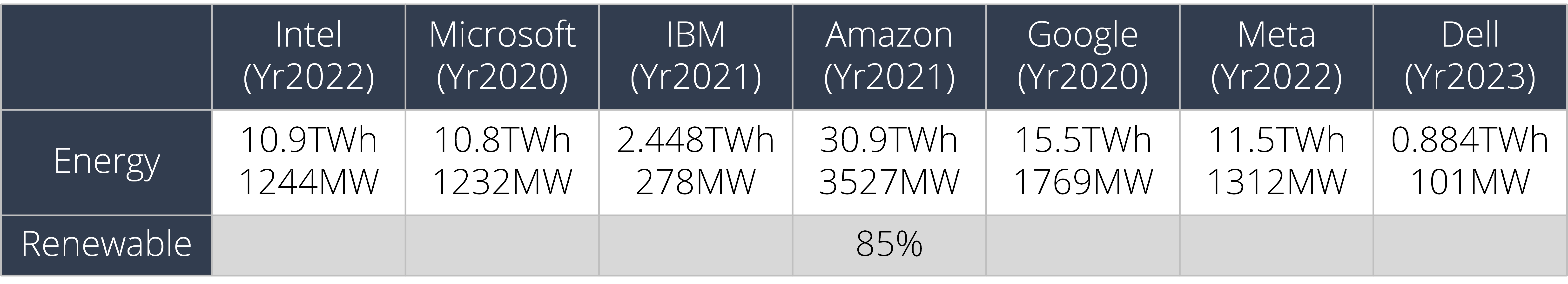

as compared with annual corporate energy usage,

Table 2 – US Corporate Energy Consumption (TWh = terawatt-hour, one trillion watt-hours)

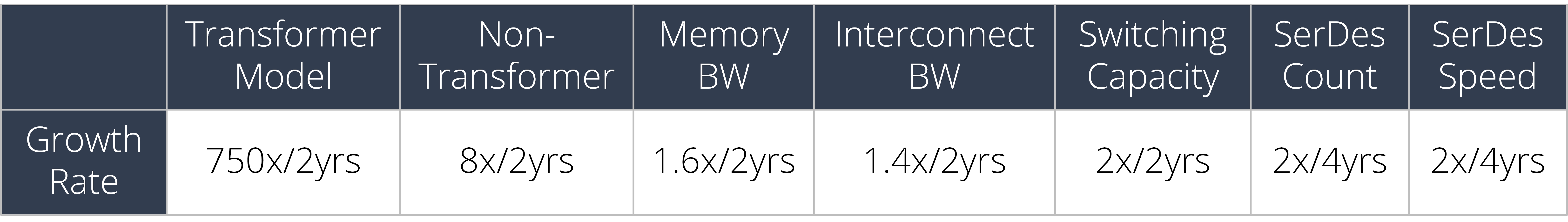

and compared with the growth of infrastructure,

Table 3 – Infrastructure Improvement

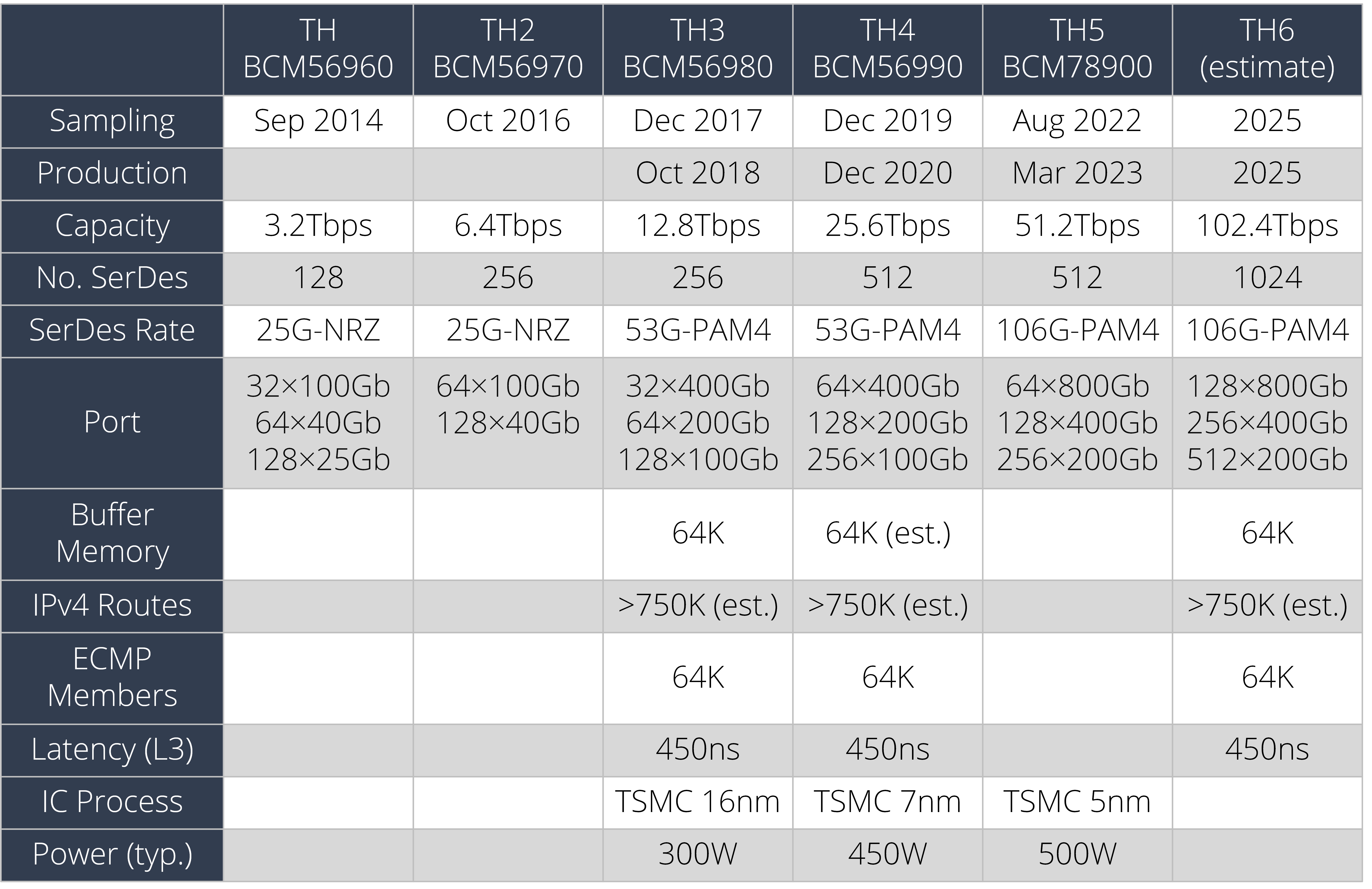

specifically, the growth rate of commercial datacenter switches,

Table 4 – Broadcom StrataXGS Tomahawk Ethernet Switch Series

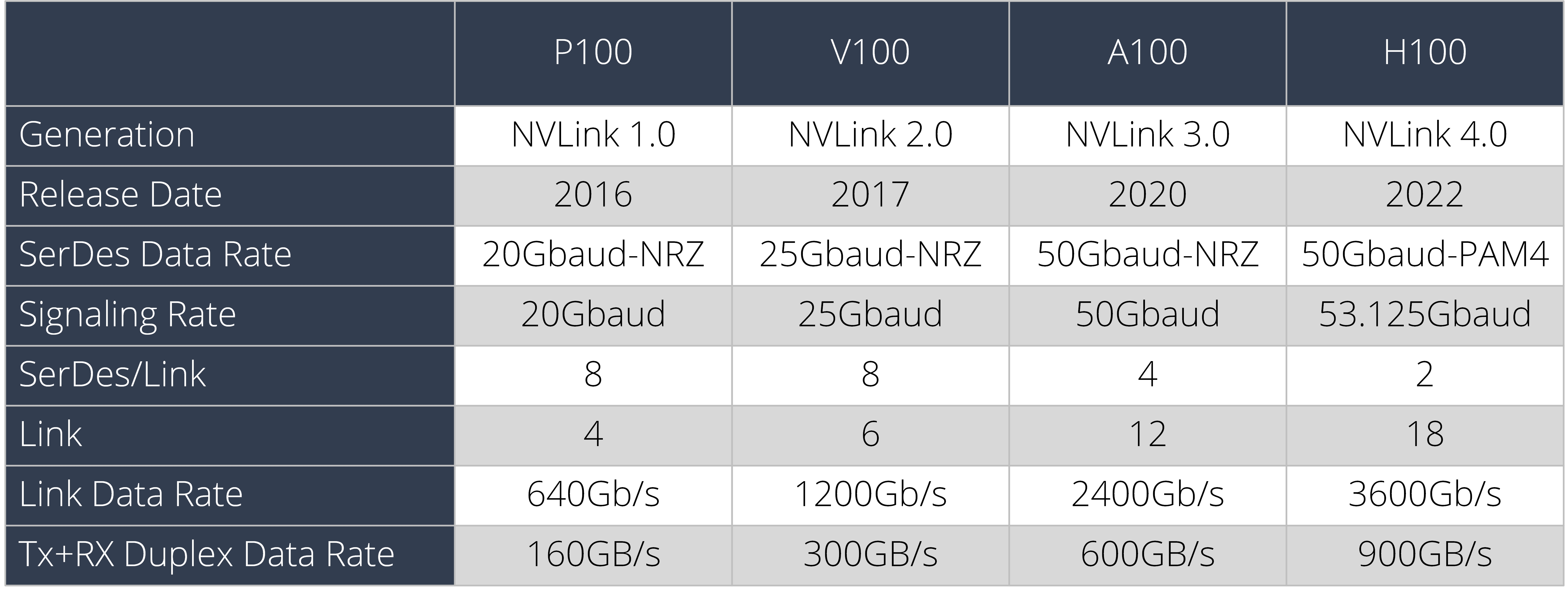

Table 5 – Nvidia GPU Servers

Key Points

- Model choice (e.g., GLaM), parallelization strategy, and computational improvements play a big part in reducing training and inference time and the associated carbon footprint.

- Computing in the Cloud rather than on premises improves datacenter energy efficiency, reducing energy costs by a factor of 1.4–2. As of 2021, only 15%–20% of all workloads had moved to the Cloud [3], so there is still plenty of headroom for Cloud growth to replace inefficient on-premises datacenters [1].

- Infrastructure improvement, including in the transceiver industry, is TOO SLOW; expensive turnkey solutions fill the gap for EACH major AI model release.

- Vendors' business models are hard to change: switching capacity doubles every two years, while radix (port count) doubles only every four years. The pace of scaling to high-radix, fully disaggregated networks is not keeping up with the AI industry.

- Network congestion significantly lengthens training duration, which in turn is closely correlated with the carbon footprint.

- Improvements in network topology and innovations in routing technology are hard to come by, arriving roughly once every 5–10 years. When they do arrive, they bring improvements on many fronts: lower cost, lower congestion, simpler networks, quadratic growth of bisection bandwidth and path diversity, full compatibility with Cloud technology, and so on.
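The growth rates quoted above (capacity doubling every two years, radix every four) can be made concrete with a quick back-of-the-envelope projection. The Python sketch below is illustrative only: the starting point (a 51.2 Tb/s switch with 64 ports), the step-wise radix model, and the use of the classic three-tier fat tree (a folded Clos, which supports k³/4 hosts when built from k-port switches) as the scaling yardstick are assumptions for illustration, not data taken from the tables.

```python
# Illustrative projection of switch growth. Starting values and the
# fat-tree yardstick are assumptions, not vendor data.

def projected_capacity_tbps(years: int, start_tbps: float = 51.2,
                            doubling_years: int = 2) -> float:
    """Switch capacity, doubling every `doubling_years` (assumed start value)."""
    return start_tbps * 2 ** (years / doubling_years)


def projected_radix(years: int, start_radix: int = 64,
                    doubling_years: int = 4) -> int:
    """Port count, doubling once per completed `doubling_years` period."""
    return start_radix * 2 ** (years // doubling_years)


def fat_tree_hosts(radix: int) -> int:
    """Max hosts in a three-tier fat tree of k-port switches: k^3 / 4."""
    if radix % 2:
        raise ValueError("fat-tree radix must be even")
    return radix ** 3 // 4


def projection(years_list):
    """Return (years, capacity Tb/s, radix, Gb/s per port, fat-tree hosts)."""
    rows = []
    for years in years_list:
        cap = projected_capacity_tbps(years)
        k = projected_radix(years)
        port_gbps = cap * 1000 / k  # per-port speed implied by capacity / radix
        rows.append((years, cap, k, port_gbps, fat_tree_hosts(k)))
    return rows


if __name__ == "__main__":
    print(f"{'year':>4} {'Tb/s':>7} {'radix':>5} {'Gb/s/port':>9} {'hosts':>9}")
    for years, cap, k, port, hosts in projection([0, 4, 8]):
        print(f"{years:>4} {cap:>7.1f} {k:>5} {port:>9.0f} {hosts:>9}")
```

Under these assumptions, capacity grows 16x over eight years while radix grows only 4x, so most of the added capacity shows up as faster ports (800 to 1,600 to 3,200 Gb/s) rather than more ports. Each radix doubling would still multiply the fat-tree host limit by 8x (65,536 to 524,288 to 4,194,304), but only once every four years.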

References

[1] David Patterson, Joseph Gonzalez, Urs Hölzle, Quoc Le, Chen Liang, Lluis-Miquel Munguia, Daniel Rothchild, David So, Maud Texier, and Jeff Dean. 2022. The Carbon Footprint of Machine Learning Training Will Plateau, Then Shrink.

[2] Weiyang Wang, Manya Ghobadi, Kayvon Shakeri, Ying Zhang, and Naader Hasani. 2023. Optimized Network Architectures for Large Language Model Training with Billions of Parameters. https://arxiv.org/abs/2307.12169

[3] B. Evans. 2021. Amazon Shocker: CEO Jassy Says Cloud Less than 5% of All IT Spending. https://accelerationeconomy.com/cloud/amazon-shocker-ceo-jassy-says-cloud-less-than-5-of-all-it-spending/